It’s no secret that AI is all around us. ChatGPT is present in many students’ assignments, AI ASMR and “choose your bed” videos dominate Instagram Reels, and SMS platforms finish users’ sentences with auto-generated response options.

Especially among its youngest users, concerns about AI being dangerous and “taking over” are often brushed off as fear-mongering and conspiracy theories. However, users should probably take some of these concerns seriously. Most large companies use AI, and some are actively feeding users’ data into it in suspicious ways that most people are unaware of.

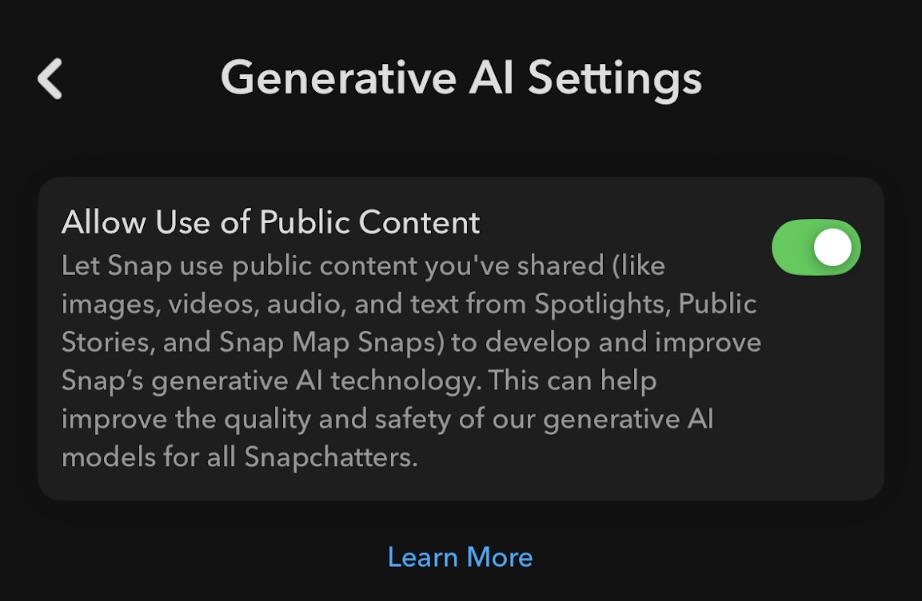

Snapchat, an extremely popular social media platform among teenagers, is a prime example. When a user downloads the app, Snapchat automatically turns on generative AI settings. This means that any content a user has publicly shared, including Stories, Snap Map Snaps, and Spotlight posts, is being used to train Snapchat’s AI models unless the user has manually turned the setting off.

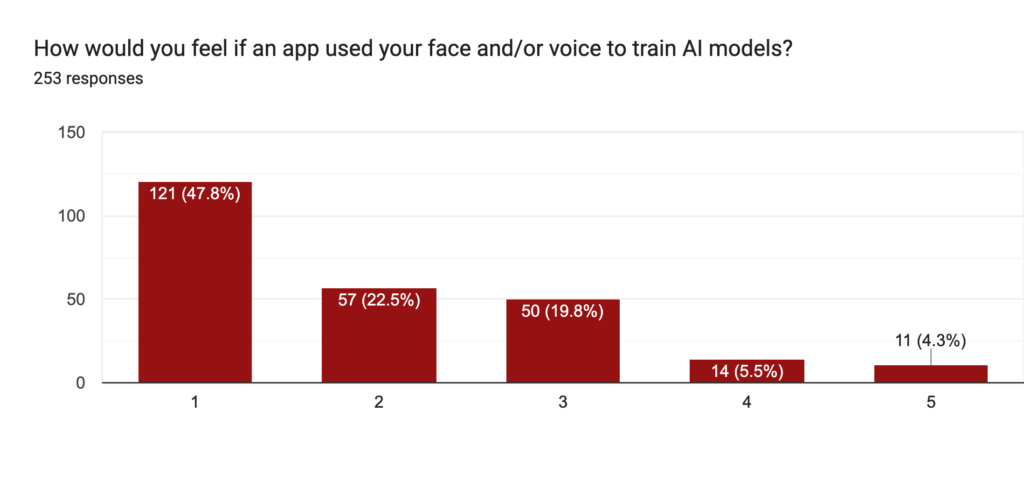

In an M-A Chronicle survey sent to all juniors, 47.6% of students reported feeling very uncomfortable with their face being used to train AI models. Still, 71% reported having the Snapchat app, suggesting that most students are likely unaware of this feature’s capabilities.

A popular trend on the app is birthday posts, where people share photos of their friends on their story to celebrate. As a result, the friends pictured are likely subjected to the same data use without their knowledge or consent.

Even if a user opts out, Snapchat won’t erase or stop using any data that has already been shared, according to the company’s terms of service. Additionally, Snapchat still processes public content for “other purposes” even if a user turns off the setting.

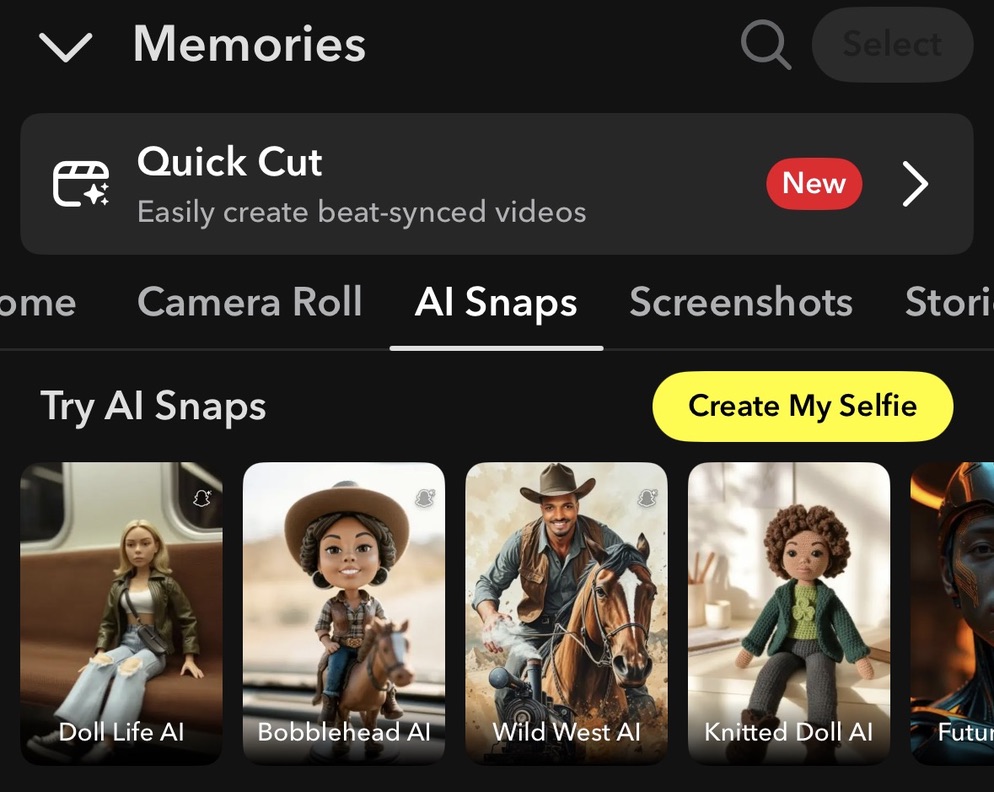

Deeper into the terms of service, Snapchat reveals that using any of the AI “My Selfie” filters also results in users’ faces being used to train AI models. Using the feature could result in a user’s face appearing in ads, likely without further notice or compensation. The terms give Snapchat “irrevocable and perpetual” rights to “publicly display all or any portion of generated images of you […] from your MySelfie.”

Even when a feature doesn’t seem unsafe on its face, past leaks and personal preferences can make people more hesitant to submit personal information to AI.

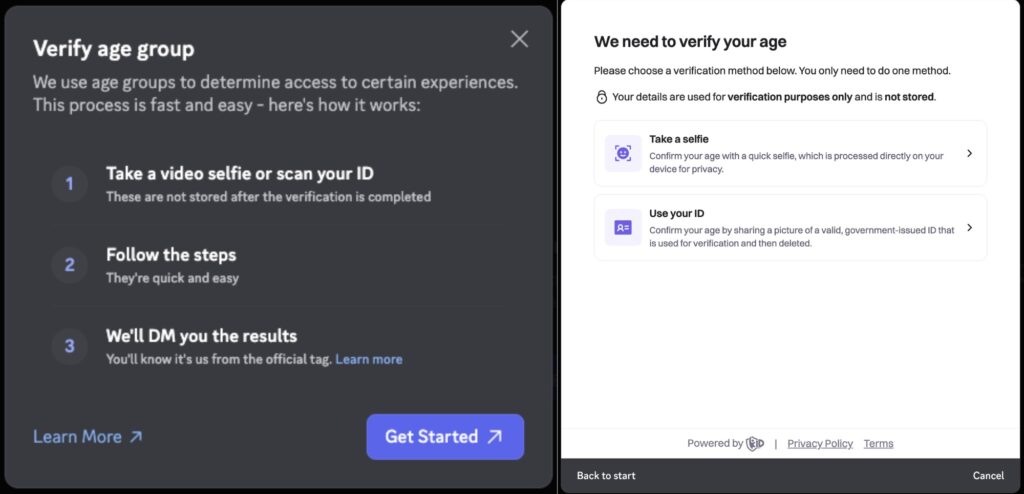

For example, many are reluctant to submit a photo of themselves to Roblox’s new age-check feature, given the platform’s past data breaches and the possibility that their face could be used for undisclosed purposes.

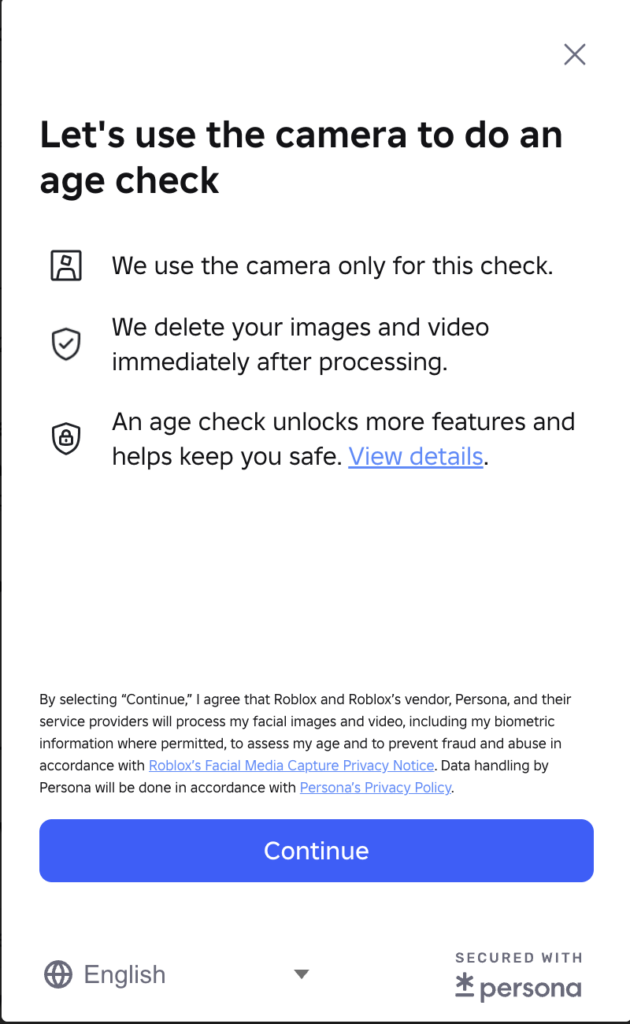

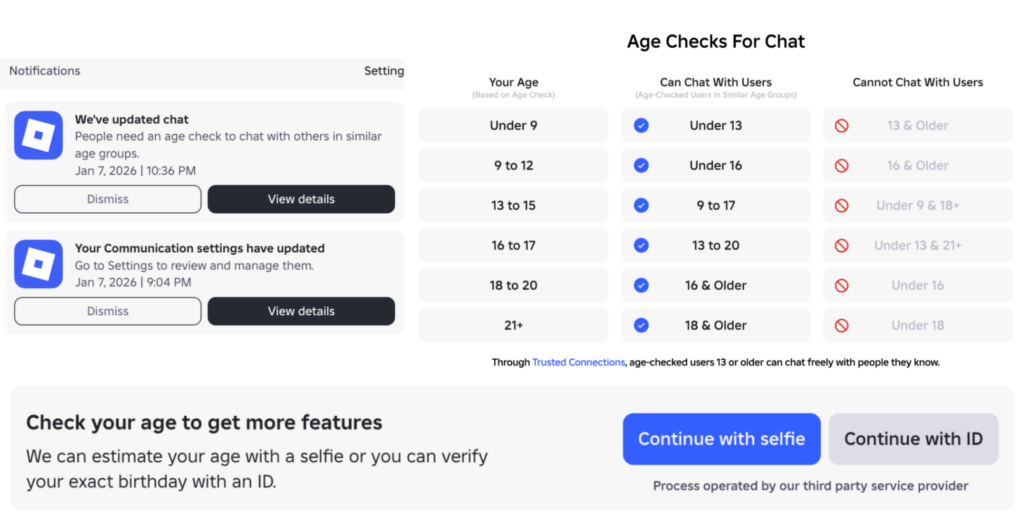

The age-check process can be completed by submitting an ID photo or taking a selfie, which is then processed by Persona, an AI-powered service that estimates a user’s age. The update is specifically aimed at improving child safety and follows 132 sexual exploitation lawsuits that were active as of March 2026.

“It’s suspicious, like, why do they want my photo? There are AI deepfakes, and companies pay other companies for your information, so what if Roblox is gonna sell my photo? What if it gets in the wrong hands, like an AI company, and they make a deepfake of me? That’s not okay,” freshman Kayla Romeyn said.

“It’s really weird, especially knowing that [Roblox is] so quick with the age they give you,” freshman Ella Melendrez said. “It was wrong, because they were saying I’m 16 or 17 years old. It’s like, I don’t want to talk to these older people.”

“It said my age group was over 18, and I’m not over 18, and so I feel like other people will try to cheat to get to the 18+ chats. That’s endangering younger people, and they don’t know what they’ll be exposed to,” Romeyn said.

In its terms of service, Roblox claims to “delete your images and video immediately after processing for facial age estimation.” However, it later states that Persona will delete the photo and biometric information within 30 days unless legal concerns require longer retention.

“Roblox claimed that they were deleting everything immediately after, but then my estimated age was updated a week later. After chatting with my friends, I found out this also happened to them,” a user wrote on a Roblox developer forum.

Discord tried a similar strategy back in October 2025. Its third-party AI verification system, 5CA, was infiltrated by hackers, leading to the leak of around 70,000 Australian and United Kingdom IDs. Some users’ IP addresses, government-ID images, partial billing information, and email addresses were also exposed.

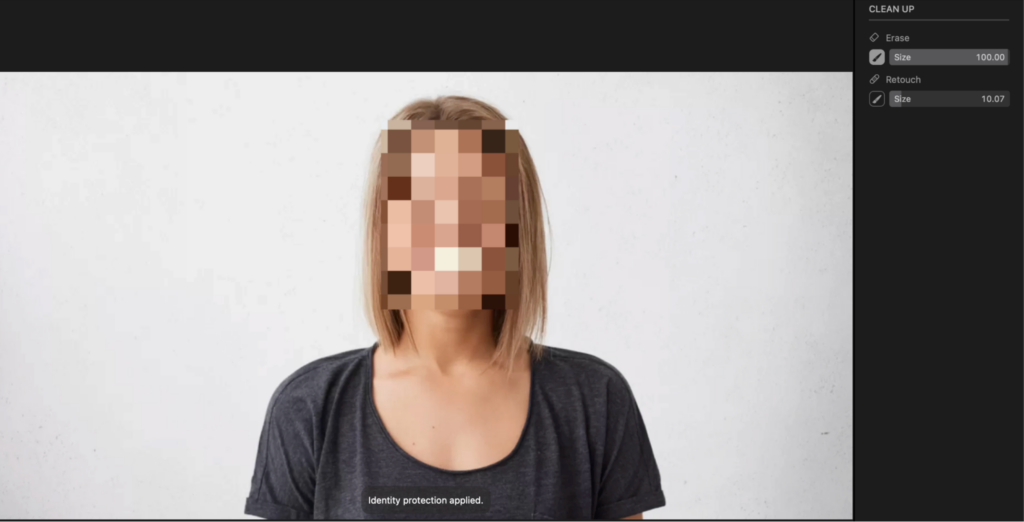

On Apple devices, the Photos app offers a “Clean Up” feature, which uses AI to “erase” a selected area. While it is often used to remove unwanted items from a background, using this feature to remove a person from a picture can lead to complications.

When a user selects a person’s face, Apple automatically converts it to a pixel grid, and a message pops up saying “Identity protection applied.”

Even though the image is processed by Apple’s own AI system, Apple adds privacy measures that could reflect broader security concerns regarding AI and identity theft.

So, maybe being cloned isn’t feasible. Still, it is unsettling that, unless users take steps to remain anonymous, their biometric information could be stored in an AI database and used without their knowledge.