In March 2023, a Belgian man terrified by the ongoing climate crisis sought comfort from Eliza, an AI chatbot on the app Chai, to soothe his fears. Unbeknownst to his wife and children, the seemingly innocent conversations quickly got out of hand. As he became more emotionally involved with Eliza, the man began to see the chatbot as another human being and to trust it over his own family. Finally, when he proposed sacrificing himself to save humanity, the AI didn’t hesitate to encourage him. Soon after, the man took his own life.

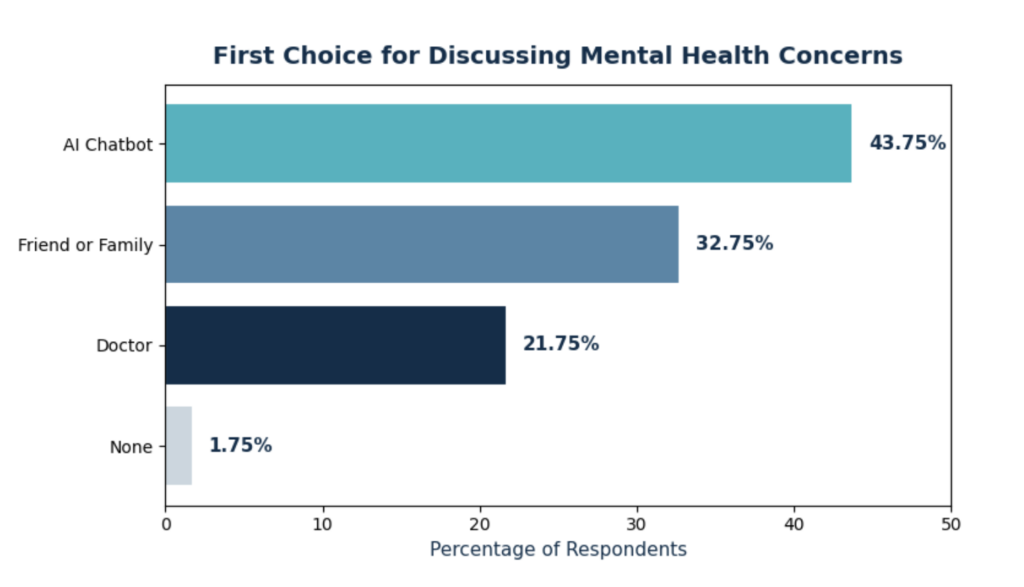

This case is undeniably extreme, but it highlights a troubling trend: as AI grows in prominence, people are turning to it for emotional support, even over other people. In a 2026 survey of American adults who have used AI for mental health support, 43.75% of respondents preferred to discuss mental health struggles with AI chatbots rather than with family, friends, or a doctor.

But why is this so concerning? If AI can comfort people, offer advice, and help solve problems, why shouldn’t people use it? The intuitive argument is that AI lacks real emotions, but the real problem goes beyond that.

One of the main reasons people bring their issues to AI is fear of judgment. “Some people might think it’s more secure because you aren’t telling your real feelings to other people,” Kevin* said.

Chatbots don’t criticize or disapprove. Instead, they encourage people to open up about problems they have been suppressing. This fear of judgment is especially prevalent among teenagers. According to a survey of adolescents aged 13-18, 9.1% had social anxiety disorder. Because the stigma surrounding mental health labels those who struggle as “weak,” people often fear social exclusion and discrimination, which makes them unwilling to seek help.

However, this fear of judgment can drive people to become dependent on AI, creating a sense of isolation that makes real therapy even harder to reach. That isolation can spiral into worse problems and alienate the individual from reality. Meanwhile, the fear itself is largely unfounded: therapists and mental health workers are legally bound to confidentiality and rarely express judgment of their patients.

“If you have problems, you should talk about it with a real person because AI might be unhealthy, since AI is not real. Also, sometimes you can develop unhealthy mechanisms when you talk to AI and use that as something to trauma dump on and get addicted,” Kevin* said.

AI also creates an illusion of understanding, despite its inability to truly empathize or think rationally, because it is designed to maximize user satisfaction and engagement. Studies have shown that AI agrees with and amplifies human biases and judgments. When a person in need of therapy chats with AI, the bot often agrees with their self-diagnosis and encourages whatever solutions they propose. The result is a positive feedback loop, an echo chamber where users hear only what they want to hear. AI works to provide comfort rather than concrete, sometimes uncomfortable, steps forward.

A phenomenon termed AI psychosis poses a major threat, especially to people with existing mental illness. In a 2025 survey in the UK, 11% of people who had used AI for mental health support reported that AI triggered or worsened symptoms of psychosis, such as hallucinations and delusions. By being unconditionally supportive, AI exacerbates people’s radical ideas and sets aside moral reasoning in order to keep its users happy.

Most users do not experience such damaging consequences, but the underlying biases persist. Over time, AI’s concerning tendencies can harmfully shape anyone’s worldview.

Still, many argue that AI’s accessibility makes it more practical than human interaction. AI is free, available day and night, and accessible within seconds. While accessibility is undeniably important, there are better alternatives. Options like 24/7 mental health hotlines offer real human interaction and are just as easy to access. At M-A specifically, wellness resources are available to help students who are struggling with mental health issues.

Students believe AI can’t replace human interaction. “I think right now probably a therapist is far superior (…) They’re more human and they’re also trained in therapy,” Steven* said.

While AI may be able to solve simple problems or provide a brief confidence boost, its potential for harm outweighs its usefulness. If you use AI for support, do so cautiously and understand the dangers it presents.

*The names of these interviewees are pseudonyms to protect their confidentiality.