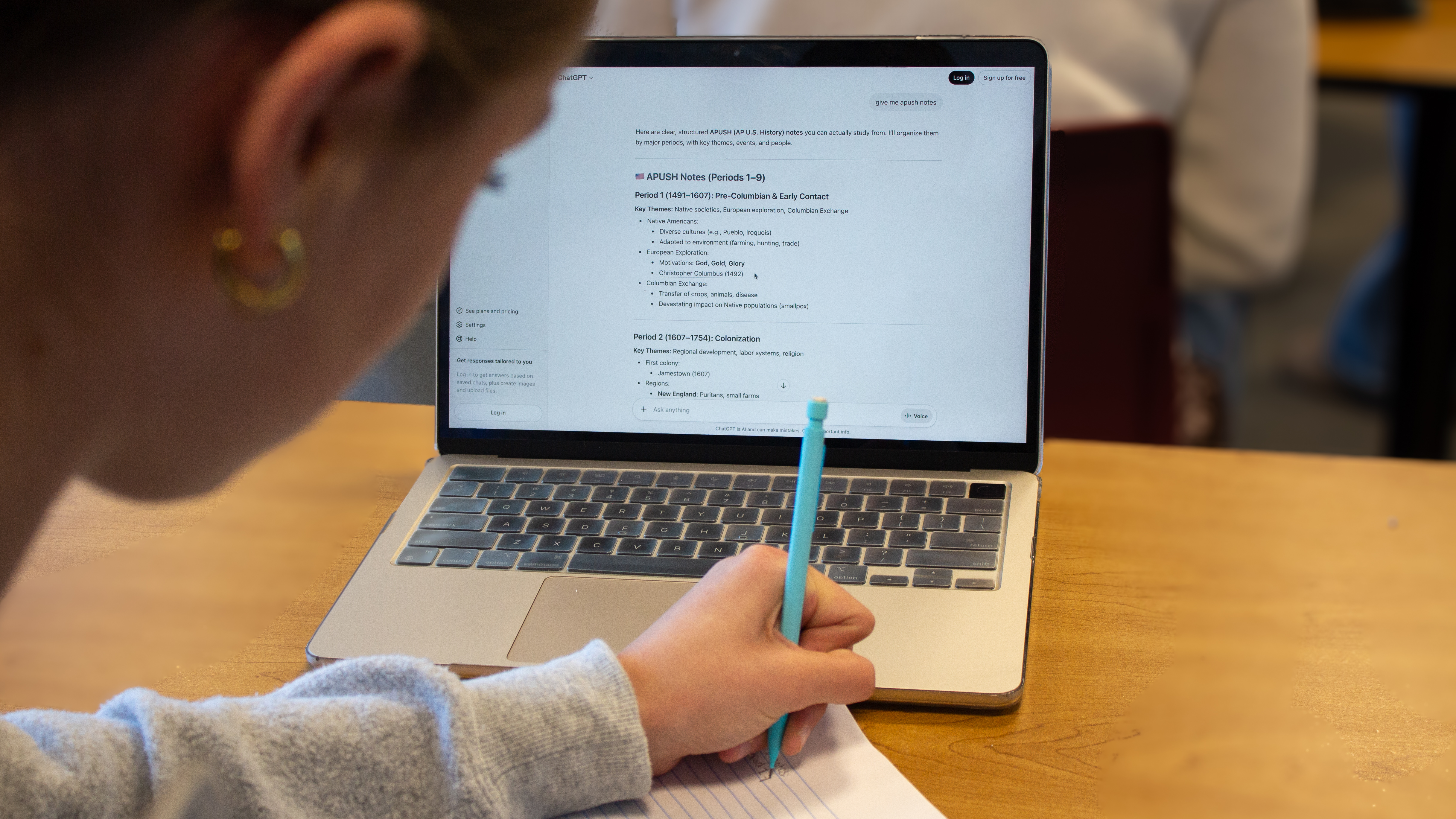

Generative AI tools like ChatGPT and Google Gemini have undeniably gained popularity among students as schoolwork aids. AI chatbots that can analyze photos of homework and provide answers, or write an essay mimicking a grade-level writing style, give students quick and easily accessible ways to cheat.

AI’s rapid integration has normalized its use in students’ minds, yet the District’s policies are less open. The Sequoia Union High School District Academic Integrity Policy defines “Using AI to create, write, or otherwise generate answers for an assignment without teacher authorization” as a form of cheating. Additionally, “Using AI to create an answer or write something for you” is defined as a form of plagiarism. Would students draw these same lines about AI use?

The M-A Chronicle interviewed nine students about the extent to which they believe using AI in school becomes cheating. They were presented with a series of scenarios, each building on the last with increasing stakes.

1. AI explains what an assignment is asking

Scenario: A student is working on their homework and gets stuck on a reading comprehension question. They don’t understand some of the language or what the question is truly asking, so they copy and paste it into generative AI and ask for clarification or a rephrased version.

Eight of the nine students interviewed did not feel that this case constitutes cheating.

“I don’t think it’s cheating because some people might need more help, and the AI can offer that,” freshman Chloe Cresson said. “It can help people spark creativity, or talk about it in a way they would understand.”

Since the student completes the work themself and only uses AI as a starting point, surveyed students viewed it as extra support, not cheating. “It’s just based on making it easier for you to understand it. You’re doing all the work yourself, just translating it into legible stuff,” sophomore Zach Rasmussen said.

However, some argued that asking AI should be the last resort. “I would probably talk to your teacher, [or] talk to a friend first,” junior Felix Crim said.

2. AI-generated answers to check work

Scenario: After completing an assignment, a student is unsure about some of their answers. Before they turn it in, they ask AI to answer the same questions, and the student uses those answers to check their work.

“If they’re checking their work and already did the work, then that’s fine,” freshman Sofia Visser said. Respondents who didn’t see the situation as cheating noted that since the AI use came after the student’s own work, it is fair play and helpful in the long run.

“I think it’s good to have confirmation that you were correct, so then you don’t learn the wrong things,” Cresson said.

If the student were to go back and change their answers following the AI’s input, Cresson felt that it would only help them and still wouldn’t constitute cheating. “I think that’s showing that they’re learning, and now they’re going to remember the correct answers instead of the wrong answers, which in the long run, will help them have better knowledge about the subject,” she said.

Others were more hesitant, reasoning that it depends on how much the student relies on the AI responses. “It depends on if they’re changing their answers,” sophomore Lauren Molnar said. “If they’re changing their answers, then possibly [it’s cheating]. If they’re doing it to get a better understanding, then no.”

“If you change your answers after, did the fact that you already did the work really matter? If you did the work, but then you change it based on the answers the AI gave you, that does feel like you’re not using your own work,” Crim said.

“It depends on the case, if they’re doing the full assignment with full work, and then trying to double-check their answers with ChatGPT, seeing if they’re correct, if no answer key is provided, then checking with their teacher, sure,” senior Crystal Winikoff said. “If they’re using it to haphazardly do the work in a non-satisfactory manner, so that they can give themselves the permission to check it with ChatGPT and then correct it by just writing down ChatGPT’s response, [then it is cheating].”

3. AI answers some parts of an assignment

Scenario: As a student completes an assignment, they periodically have AI answer some questions or generate some parts needed to complete it.

For this circumstance, respondents’ answers varied. Five felt that it was cheating, while others believed that the scenario treads a fine line, but as long as the student is still learning, it’s not cheating.

“I think that’s generally okay because it’s helping them learn things that they obviously didn’t understand, but I think then you would have to be a little bit more careful to make sure that you’re still learning from what the AI says, and you’re not just copying and pasting,” Cresson said.

“I don’t think it should be allowed because you’re trying to shortchange work by asking an LMM [Large Multimodal Model] to do work for you,” Winikoff said. “It will screw you over as a student, it will negatively impact your performance in class, so I would say, honestly, if you’re doing that and you do badly, [it’s] your own fault. I think that it’s not cheating, it just shouldn’t be condoned.”

4. AI completes an entire assignment

Scenario: A student copies all their homework questions from AI-generated answers, or has AI plan and generate the content of their slideshow before a presentation.

The majority of students felt it was cheating, while one respondent felt it could still be okay if the student really needed help, or if the teacher didn’t have strict policies.

“It depends on the length of the assignment, but I would say if they’re struggling, no,” freshman Issam Naji said. “If they get caught cheating and get in trouble, then they shouldn’t use AI, but if the teacher doesn’t mind, it’s fine.”

“Yes [it is cheating], because you’re not doing the assignment on your own, and most of the reasons for assignments is so that you’re improving and you’re learning the material better,” senior Ash Rancourt said.

For many scenarios, students’ opinions on whether AI use counted as cheating came down to the teacher’s policies. “If it’s in the syllabus and they say, ‘Yes, you can use AI’ distinctly, then yes, it’s okay. But if they were to say, ‘AI is okay’ offhandedly, don’t do it,” Rasmussen said.

5. AI completes a non-graded test/essay/project

Scenario: A student is taking an online quiz that doesn’t count toward their grade and uses AI to fill it out. Another student is in class, and their teacher asks them to write an essay as practice that won’t actually be graded, so they have AI write it.

The students were torn. Some argued that since non-graded assessments still test a student’s learning, using AI on them would be against the rules. Others thought that since the work wouldn’t be graded, it couldn’t be cheating.

“I’d say no, because you’re not getting points, but I feel like that’s kind of destroying the point,” Crim said. “I feel like it’s not cheating because you’re not going to get rewarded for work you didn’t do, but I feel like you are missing the point. If it’s not for points, it’s because they want you to practice, so I think it’s dishonest, but it’s not cheating.”

Cresson believes that, while not necessarily cheating, it still harms the student. “I think that might be more of a red flag for you that you need to learn, but I think it’s okay if that’s what you really need to finish the assignment,” she said.

Similarly, Molnar felt that it was unfair to both the teacher and the student. “If the teacher sees it, then yes. Also, you’re cheating yourself. If only that person sees it, then it’s not cheating, but that’s not good,” she said.

“If it’s still seen by the teacher, you have to put effort into it. I mean, it would be entirely making yourself seem obsolete, and also it would make you look bad to the teacher,” Rasmussen said.

6. AI completes an entire graded test/essay/project

Scenario: During a test, a student asks AI for the correct answers or uses it for every step of a project.

Eight of the nine students interviewed drew the line hard here, feeling that if anything was cheating, it was this.

Many pointed out that if a student needs to use AI on a test, it is no longer a support tool but a cheat sheet. “I think that would be cheating, because at that point, since it’s graded, you should already know the content and the curriculum. If you have to use AI to remember the things that you already learned, then that’s bad, and that is cheating,” Cresson said.

“If you’re cheating on an entire graded test, and you’re using AI [to do it], that’s a real problem, and you’re not understanding any of the material,” Rancourt said. “But also, every school has an anti-cheating policy, whether it’s with AI or whether it’s ‘you are trying to look at someone else’s answers’ because they’re just trying to make sure your education is good and you’re learning what you have to. So if you are using AI on an assignment, you’re not doing it, and it’s completely cheating.”

One student still thought this was a gray area. If the student were using AI on an essay or project, but only as inspiration, then it might not be cheating. “It depends, if they’re brainstorming ideas, like if you’re asking it for examples for claims, then it’s fine,” Naji said.

Overall, students prioritized learning and growth as the most important outcomes. As long as AI was helping them rather than replacing them, they generally didn’t consider it cheating.

“I think it’s mostly playing it by ear on a lot of these things. Some teachers will be okay with it, most of them won’t be. You’ve got to use context clues, you’ve got to make sure that you know you are doing the right thing,” Rasmussen said.